MusicCommentator: A Comment Generation Method based on Acoustic and Textual Features

We propose a system called MusicCommentator that suggests possible comments at appropriate temporal positions in a musical audio clip.

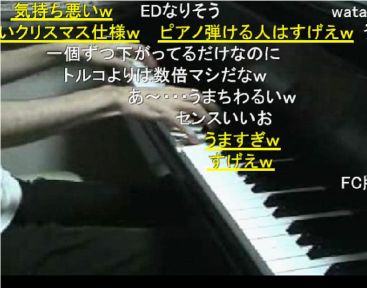

In an online video sharing service, many users can provide free-form text comments on temporal events occurring within clips, rather than on entire clips. To emulate this commenting behavior, we propose a joint probabilistic model of audio signals and comments. The system trains the model using existing clips and the comments that users have given to those clips.
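To make the idea of learning from clips and their time-stamped comments concrete, here is a minimal count-based sketch. It is not the model from the paper: it assumes each audio frame has already been reduced to a discrete acoustic label (the `state` strings below are hypothetical), and it simply estimates how often each label attracts a comment and which words appear with it. All function names are illustrative.

```python
from collections import Counter, defaultdict

def train(clips):
    """Collect counts from training clips.

    clips: list of clips; each clip is a list of (state, words) frames,
    where `state` is a discrete acoustic label (an assumption of this
    sketch) and `words` is the possibly empty list of comment words
    posted at that frame.
    """
    frames = Counter()                  # frames seen per acoustic state
    commented = Counter()               # frames that received a comment
    word_counts = defaultdict(Counter)  # word frequencies per state
    for clip in clips:
        for state, words in clip:
            frames[state] += 1
            if words:
                commented[state] += 1
            word_counts[state].update(words)
    vocab = {w for counts in word_counts.values() for w in counts}
    return frames, commented, word_counts, vocab

def p_comment(model, state):
    """Smoothed probability that a frame in `state` gets a comment."""
    frames, commented, _, _ = model
    return (commented[state] + 1) / (frames[state] + 2)

def p_word(model, state, word):
    """Smoothed probability of `word` given the acoustic `state`.

    Assumes the training data contained at least one comment word.
    """
    _, _, word_counts, vocab = model
    total = sum(word_counts[state].values())
    return (word_counts[state][word] + 1) / (total + len(vocab))

# Toy data: one clip with three frames (labels and words are made up).
clips = [[("loud", ["cool", "guitar"]), ("quiet", []), ("loud", ["cool"])]]
model = train(clips)
```

With this toy data, `p_comment(model, "loud")` is higher than `p_comment(model, "quiet")`, and "cool" is the most probable word for "loud" frames. A real system would work with continuous acoustic features rather than hand-assigned labels.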

Given a new clip and some of its comments, the model is used to estimate which temporal positions could be commented on and what comments could be added at those positions. The system then concatenates candidate words, taking language constraints into account.
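One common way to order candidate words under a language constraint is a dynamic-programming search over a bigram model. The sketch below illustrates that general technique and is an assumption, not a description of the paper's procedure: `word_scores` stands in for per-word probabilities at a chosen position, `bigram` holds hypothetical word-to-word transition probabilities, and unseen word pairs receive a small floor probability.

```python
import math

def best_sentence(word_scores, bigram, length, start="<s>", floor=1e-6):
    """Return the highest-scoring sequence of `length` words.

    word_scores: word -> relevance probability (must be > 0)
    bigram: (previous word, word) -> transition probability
    floor: probability assigned to word pairs missing from `bigram`
    """
    words = list(word_scores)
    # best[w] = (log score, sequence) of the best sequence ending in w
    best = {
        w: (math.log(bigram.get((start, w), floor)) + math.log(word_scores[w]), [w])
        for w in words
    }
    for _ in range(length - 1):
        extended = {}
        for w in words:
            candidates = [
                (score + math.log(bigram.get((prev, w), floor))
                 + math.log(word_scores[w]), seq + [w])
                for prev, (score, seq) in best.items()
            ]
            extended[w] = max(candidates, key=lambda c: c[0])
        best = extended
    return max(best.values(), key=lambda c: c[0])[1]

# Hypothetical scores and transitions, for illustration only.
word_scores = {"nice": 0.5, "guitar": 0.3, "solo": 0.2}
bigram = {("<s>", "nice"): 0.8, ("nice", "guitar"): 0.7, ("guitar", "solo"): 0.9}
print(best_sentence(word_scores, bigram, 3))  # ['nice', 'guitar', 'solo']
```

The search keeps only the best partial sequence ending in each word, so its cost grows linearly with the sentence length and quadratically with the number of candidate words.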

Our experimental results showed that using the existing comments on a new clip improved the accuracy of generating suitable comments for it.

- Slides
- Demo video of comment generation (124MB mpeg4)
- Kazuyoshi Yoshii, Masataka Goto: "MusicCommentator: Generating Comments Synchronized with Musical Audio Signals by a Joint Probabilistic Model of Acoustic and Textual Features," In Proc. 8th International Conference on Entertainment Computing (ICEC), pp. 85-97, 10/29-11/2, 2007.

Author: Kazuyoshi Yoshii (AIST)

mail to k.yoshii(at)aist.go.jp